From protocol logic to defensible, review-ready decision models — locked and verified at every stage.

Method-focused, not outcome-judging — we make decision logic inspectable and testable.

We turn complex methods, protocols, and care pathways into transparent decision models and executable code.

Each stage includes a clear approval gate so logic stays consistent, scope stays controlled, and decisions remain explainable under review.

Not a CRO. Not a biostats service. We formalize decision logic.

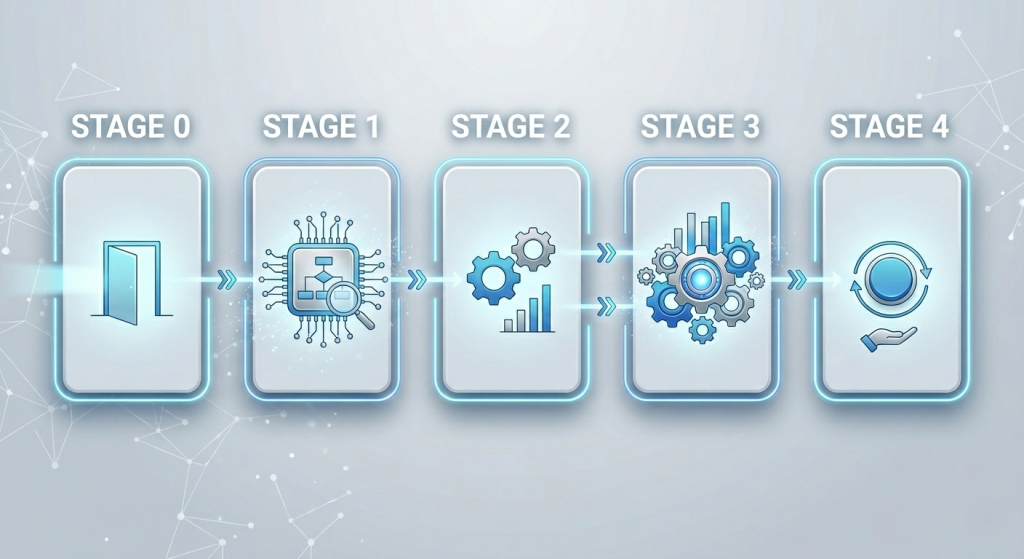

The 5-stage path (at a glance)

Stage-0 is the entry gate. Stages 1–3 are the core build. Stage 4 is optional execution support.

Stage-0 and audits test decision logic—not outcomes

The golden rule of the method-to-model process

We never start coding or simulation work without a reviewed and approved decision definition and method.

Stage-1 (Logic Lock & Architecture) is mandatory for every engagement.

Without a model-ready decision definition, any downstream modeling becomes fragile and hard to defend.

“What decision are you trying to defend — and what’s the lowest-effort path to get there?”

Before any commitment, we assess modeling potential using a short, non-sensitive summary (no NDA required).

You receive a written verdict and the minimum next step.

Result: fast clarity on whether your decision is model-ready — before you commit time, budget, or data.

You send

- 5–10 lines describing the decision you’re facing

- A non-sensitive summary of the method/protocol/pathway (high level)

You receive (within ~1 business day)

- Verdict:

  - Modelable as-is

  - Modelable with changes (what must be clarified/locked)

  - Not a fit now (brief rationale)

- Decision routing: recommendation of the best-fit module(s) for your decision gate

- Minimum Inputs List: the minimum information needed to begin Stage 1

Cost: Free | Privacy: No NDA | Turnaround: ~24 hours

“Turn text into explicit, reviewable decision logic.”

This is the most important stage: we extract hidden assumptions and convert the method into a decision-grade architecture that can be reviewed and approved before formalization or code.

Privacy

NDA is included in the Stage 1 intake form.

Deliverables

(Stage 1 outputs)

Assumptions & Fragility Register (MRR)

Explicit assumptions, tagged, reviewable (what the decision is betting on)

Model Architecture (inputs → states → outputs)

The official decision structure, not a narrative

Input Spec Sheet (ISS)

Variables, units, timing, ranges, and constraints

Scenario Pack v1 (definition-level)

Baseline + stress scenarios, parameter ranges, evaluation metrics

Verification Plan (key differentiator)

How we will prove in Stage 3 that the delivered code matches this architecture (traceability requirements + acceptance tests + version-lock rules).


Use-Case Confirmation Lock (Scope Lock)

The chosen use case + clear scope boundaries + success criteria

Deliverables are inspectable artifacts (maps, contracts, and testable assumptions)—not slides.
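As a rough illustration of what an inspectable artifact can look like, one row of an Input Spec Sheet could be held as structured data with a range check attached. This is a minimal sketch under stated assumptions: the class name `InputSpec`, its fields, and the example variable are hypothetical and do not describe the actual deliverable format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputSpec:
    """One hypothetical Input Spec Sheet row (field names are illustrative)."""
    name: str
    unit: str
    timing: str       # when the value is observed, e.g. "baseline"
    minimum: float    # lowest allowed value, inclusive
    maximum: float    # highest allowed value, inclusive

    def accepts(self, value: float) -> bool:
        """Return True when the value lies inside the declared range."""
        return self.minimum <= value <= self.maximum


# Invented example entry; the variable and its range are placeholders.
age = InputSpec(name="age_years", unit="years", timing="baseline",
                minimum=18.0, maximum=90.0)

print(age.accepts(42.0))   # value inside the declared range
print(age.accepts(120.0))  # value outside the declared range
```

Declaring the row as a frozen dataclass means the spec cannot be mutated after it is created, which loosely mirrors the idea of a reviewable, locked definition.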

Result

A review-ready decision architecture you can circulate internally or put in front of external reviewers

Approval gate (Stage 1)

We proceed only after the Architecture + Scenario Pack v1 + Verification Plan are approved.

“Convert the locked architecture into formal, implementation-ready mathematics.”

We translate the locked architecture into explicit equations and formalize the input/output definitions as an official schema so the computation cannot drift during implementation.

Deliverables

(Stage 2 outputs)

- Formula Pack: Equations, parameters, constraints, boundary/initial conditions

- I/O Contract (Official Schema): Types, shapes, allowed ranges, file structures, timing alignment

- Scenario Pack v2 (formal configs): Formal scenario configurations aligned with the contract
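To make the idea of scenario configurations "aligned with the contract" concrete, the sketch below checks a scenario config against a small contract of types and allowed ranges. Everything named here is an assumption for illustration: the `CONTRACT` layout, the field names `dose_mg` and `horizon_days`, their ranges, and the `violations` helper are hypothetical, not the actual I/O Contract schema.

```python
from typing import Any

# Hypothetical contract: field name -> (expected type, minimum, maximum).
CONTRACT: dict[str, tuple[type, float, float]] = {
    "dose_mg": (float, 0.0, 500.0),
    "horizon_days": (int, 1, 365),
}


def violations(config: dict[str, Any]) -> list[str]:
    """List every way a scenario config departs from the contract."""
    problems = []
    for field, (expected_type, low, high) in CONTRACT.items():
        if field not in config:
            problems.append(f"{field}: missing")
            continue
        value = config[field]
        if not isinstance(value, expected_type):
            problems.append(f"{field}: expected {expected_type.__name__}")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    # Fields the contract does not know about are also reported.
    for field in sorted(config.keys() - CONTRACT.keys()):
        problems.append(f"{field}: not in contract")
    return problems


print(violations({"dose_mg": 50.0, "horizon_days": 30}))
print(violations({"dose_mg": 900.0}))
```

Returning a list of violations rather than raising on the first one lets a reviewer see every mismatch in a single pass.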

Approval gate (Stage 2)

We move to coding only after the Formula Pack + I/O Contract are approved.

“Deliver a reproducible decision model — and prove it matches the locked logic.”

We implement the approved formulas as a professional Python package and deliver verification evidence that the code matches the locked Stage-1 architecture and assumptions (inspectable, testable).

Inputs

(Stage 3 requirements)

- A sample dataset (synthetic or de-identified is usually sufficient)

- If you plan to run locally: your compute specs (OS/CPU/RAM/GPU constraints)

Deliverables

(Stage 3 outputs)

- Python Code Package / Repo (versioned): Structured, documented, reusable

- Runbook + Config Templates: Install/run instructions + scenario configs

- Sample Run Outputs: Validated example run on your data format + output schema

- Verification Evidence (“Proof of Match”):

  - Completed traceability matrix (Architecture → Code)

  - Acceptance tests proving code implements the locked architecture

  - Scenario equivalence checks (Scenario Pack ↔ Code runs)

  - Version-lock rules and reproducibility notes
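Two of the verification items above lend themselves to a small sketch: finding architecture elements with no mapped code, and checking that a code run reproduces a scenario's expected outputs. The element IDs, function names, and tolerance below are hypothetical placeholders, and this is only one possible way such checks might be written.

```python
import math

# Hypothetical element IDs from a locked architecture.
ARCHITECTURE = ["A1-eligibility", "A2-risk-score", "A3-decision"]

# Hypothetical traceability matrix: element ID -> implementing function name.
TRACE = {"A1-eligibility": "is_eligible", "A2-risk-score": "risk_score"}


def untraced(elements: list[str], matrix: dict[str, str]) -> list[str]:
    """Return architecture elements that no code is mapped to."""
    return [element for element in elements if element not in matrix]


def runs_match(expected: dict[str, float], actual: dict[str, float],
               rel_tol: float = 1e-9) -> bool:
    """Check that a code run reproduces the scenario's expected outputs."""
    if expected.keys() != actual.keys():
        return False
    return all(math.isclose(expected[key], actual[key], rel_tol=rel_tol)
               for key in expected)


# Any element listed here would be a gap in the traceability matrix.
print(untraced(ARCHITECTURE, TRACE))
```

In this toy setup `A3-decision` has no entry in the matrix, so it is reported as a gap.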

Result

You receive a long-term digital decision asset — not just code, but a traceable decision engine you can rerun and defend when reviewers, sponsors, partners, or auditors ask how the decision was made.

“We run it for you—securely—and deliver decision-ready outputs.”

Execution support only. The underlying decision logic remains the locked Stage-1 model (no outcome tuning).

If you prefer to focus on interpretation and decision-making, we execute the model on your sensitive data under NDA and deliver decision-ready outputs.

Deliverables

(Stage 4 outputs)

- Simulation results (raw outputs + structured result files)

- Final Decision Notes (1–2 pages): Concise interpretation linked back to Stage-1 assumptions and architecture

- Run Certificate: Code version + configuration used (audit trail)
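One way to picture a run certificate is a record that pairs the code version with a fingerprint of the exact configuration used. The sketch below is an assumption about how that could be done, not the actual certificate format; the function name `run_certificate` and the returned keys are hypothetical.

```python
import hashlib
import json


def run_certificate(code_version: str, config: dict) -> dict:
    """Build a small audit record tying a run to its code version and config."""
    # Serialize with sorted keys so the same config always hashes the same way,
    # regardless of the order in which its keys were written.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return {"code_version": code_version, "config_sha256": digest}


certificate = run_certificate("1.2.0", {"scenario": "baseline", "iterations": 100})
print(certificate["code_version"], certificate["config_sha256"][:12])
```

Because the hash is taken over a canonical serialization, any change to a configuration value yields a different fingerprint, while reordering keys does not.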

Scope clarity

Managed runs are scoped upfront (datasets, scenario count, iterations), with clear pricing tied to that scope.

What makes this process different

- Locks before code: architecture and assumptions are approved before formalization and implementation

- Approval gates: each stage has a clear “go/no-go” checkpoint

- Verification evidence: code is delivered with proof it matches the locked logic

- Versioned artifacts: LaTeX/PDF + structured configs + reproducible runs

- Scope discipline: scenario add-ons vs scope extensions are handled explicitly

- Outcome-neutral: we test decision logic, not outcomes

Approvals, Change Requests, and Versioning

To keep every Method-to-Model project traceable and defensible, we treat the work like a reviewable product—not a loose set of files.

Every major decision artifact (MRR, Architecture, Formula Pack, Code Package) is versioned and frozen when approved, so later choices can be defended.

Versioned deliverables

Each major deliverable has a clear version number (e.g., MRR, Model Architecture, Formula Pack, Code Package). This makes discussions, reviews, and revisions unambiguous—everyone knows exactly which version is being referenced.

Freeze after approval

When you approve a deliverable, it is marked as frozen. Future work builds on that frozen version, so the project stays consistent and the logic—and the decisions derived from it—don’t drift over time.

See an example: Stage-1 record with versioned PDFs (Zenodo)

Change Requests (CR)

If something substantial needs to change after a deliverable is frozen, we log it as a Change Request with a clear description of what changes, why it changes, and the expected time/cost impact—so scope stays controlled and previously approved decisions stay defensible.

“Before a deliverable is frozen, revisions are part of the normal stage loop. Change Requests apply only after approval/freeze.”
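The revise-then-freeze rule can be sketched as a small state machine: revisions are allowed until approval, and after that only logged Change Requests are accepted. The class `Deliverable` and its method names are hypothetical and are meant only to illustrate the rule, not to describe any real tooling.

```python
from dataclasses import dataclass, field


@dataclass
class Deliverable:
    """Hypothetical versioned artifact that can be frozen on approval."""
    name: str
    version: str
    frozen: bool = False
    change_requests: list[str] = field(default_factory=list)

    def revise(self, new_version: str) -> None:
        """Before approval, revisions are part of the normal stage loop."""
        if self.frozen:
            raise PermissionError("frozen: log a Change Request instead")
        self.version = new_version

    def approve(self) -> None:
        """Approval freezes the current version."""
        self.frozen = True

    def request_change(self, description: str) -> None:
        """After freeze, changes are only recorded, never applied silently."""
        if not self.frozen:
            raise ValueError("not frozen yet: revise directly")
        self.change_requests.append(description)


pack = Deliverable(name="Formula Pack", version="0.1")
pack.revise("0.2")   # allowed: not yet approved
pack.approve()       # version 0.2 is now frozen
pack.request_change("Clarify boundary condition; time/cost impact to be assessed")
print(pack.version, pack.frozen, len(pack.change_requests))
```

Keeping the Change Request as a separate logged entry, rather than editing the frozen version in place, is what preserves a clear record of what was approved and when it changed.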

Data, IP, and Privacy

We design the workflow to minimize sensitive exposure while keeping results usable for real research decisions.

Data minimization by default

Whenever possible, we work with de-identified, aggregated, or synthetic data. Identifiable patient data is rarely necessary for the types of modeling, simulation, and consistency checks we deliver.

NDA when appropriate

We do not require an NDA for Stage-0. If you choose to move forward, NDAs and data-processing terms can be put in place from Stage-1 onward, when sensitive materials may be shared.

Client-first privacy

By default, your materials, model logic, and outputs remain private to you. We do not publish or share anything publicly unless you provide explicit written permission and the content has been sanitized appropriately.

Policies

If you need more detail on data handling, security expectations, and publication rules, please see our Policies page.

“We avoid identifiable data by default. If sensitive data is required for managed runs, it is handled only in Stage 4 under NDA/DPA.”

Start here

Most engagements begin with a quick Stage-0 review.
