De-risk protocol lock—before the first drift pulls you into an amendment cycle.

You own the product strategy. We protect trial interpretability and decision integrity.

We stress-test protocol, sample size, regimen, and transferability so decisions hold up under real-world drift—before budget and timelines are locked.

Not a CRO. Not a biostats vendor. We stress-test the decisions your protocol depends on.

~1 business day • no sensitive data required • we test decision logic, not outcomes

Most milestone slips don’t start with biology. They start with fragile assumptions.

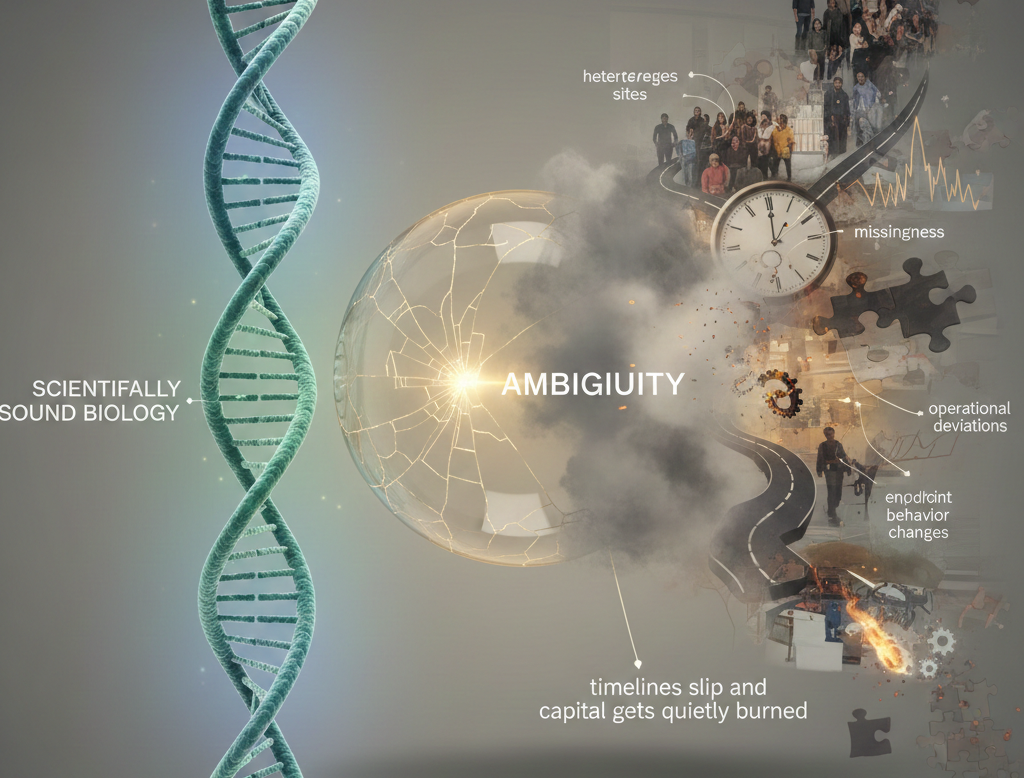

A protocol can be “scientifically sound” and still miss its milestone because the real world adds variance: heterogeneous sites, missingness, adherence drift, operational deviations, endpoint behavior changes.

If these aren’t stress-tested upfront, the program doesn’t necessarily fail cleanly—it becomes ambiguous—and ambiguity is where timelines slip and capital gets quietly burned.

If you don’t fix this

- You lock a protocol that looks fine on paper—then recruitment starts and changes become expensive (or impossible).

- Power assumptions that looked realistic break under missingness, site variance, and heterogeneous response → diluted signal.

- You get pulled into an amendment cycle that burns timeline and sponsor confidence.

- You end up with a readout that’s hard to interpret → wrong go/no-go decision.

What you buy: a defensible decision trail for your milestone

Assumptions / Fragility Register (MRR)

Ranked “what must go right” assumptions tied to milestone exposure.

Scenario Pack (Drift Stress Tests)

Focused what-ifs: missingness, adherence, heterogeneity, site effects, operational variance.

Milestone Decision Note

Forwardable decision memo (IC / internal review ready): minimum fix set, trade-offs, and thresholds that change the decision.

Artifacts are inspectable (LaTeX/PDF), versioned, and reviewable—not slide decks or black-box outputs.

Audit tiers (pick the depth you need)

+ Protocol Blind-Spot Scan

+ Real-World Power Check (lite: variance + missingness + effective N)

Best for:

When you’re close to protocol lock and need a fast, decision-grade view of what breaks first—before early drift pushes you into an amendment cycle.

Outputs:

- Assumptions & Fragility Register (MRR) — lite, 1-page artifact

- Milestone Risk Snapshot — top fragilities + “fix-first” actions (what to change before lock)

Scope:

Top assumptions + first-break analysis + detectability under drift (variance, missingness, effective N).
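To make "effective N" concrete, here is a minimal sketch of how planned enrollment can shrink once missingness and site clustering are accounted for. It uses the textbook design-effect formula 1 + (m - 1) * ICC; the function name, the inputs, and the choice of this particular formula are illustrative assumptions, not a description of the audit's actual method.

```python
def effective_n(enrolled, missing_rate, n_sites, icc):
    """Rough effective sample size after dropout and site clustering.

    All inputs are hypothetical planning numbers:
      enrolled     - planned total enrollment
      missing_rate - expected fraction with a missing primary endpoint
      n_sites      - number of recruiting sites
      icc          - intraclass correlation (share of variance between sites)
    """
    completers = enrolled * (1.0 - missing_rate)
    avg_per_site = completers / n_sites
    # Standard design effect for clustered observations.
    design_effect = 1.0 + (avg_per_site - 1.0) * icc
    return completers / design_effect


n_eff = effective_n(enrolled=200, missing_rate=0.15, n_sites=10, icc=0.05)
print(round(n_eff, 1))
```

With these made-up inputs, 200 enrolled participants carry roughly the information of 94 independent ones, which is the kind of gap a lite power check is meant to surface before lock.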

Typical turnaround:

Fast (days)

Best when you need:

A clean “go / don’t go / fix first” view before sponsor alignment or internal lock.

+ Tier A

+ Regimen Robustness & Dose Decision

+ Transferability & Scaling Check

+ Measurement Decision Design (included when measurement choice is decision-critical)

Best for:

Milestones where the protocol must survive real-world variance (sites, behavior, missingness, heterogeneity)—and you want to lock with confidence, not hope.

Outputs:

- Assumptions & Fragility Register (MRR) (full)

- Assumptions Map (a deeper view within the same artifact family as the register, not a separate deliverable)

- Milestone Decision Memo (IC / internal review ready)

- Scenario Pack (Drift Stress Tests) — the minimal set of what-ifs that actually change the decision

Includes (standard scenario stress tests):

- Missingness + informative dropout

- Site-to-site variability / measurement drift

- Heterogeneity + effect dilution
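The three stress tests above can be sketched as simple adjustments to a planning-stage power calculation. The example below is a simplified illustration under stated assumptions: a two-arm comparison of means with a normal approximation, dropout treated as random loss of sample (informative dropout would also bias the estimate and needs its own model), site drift treated as inflated standard deviation, and heterogeneity treated as a responder fraction that dilutes the average effect. All names and numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def power_two_arm(n_per_arm, effect, sd, alpha=0.05):
    """Approximate power of a two-sided z-test on the difference in arm means.

    Ignores the negligible probability of rejecting in the wrong direction.
    """
    z = NormalDist()
    se = sd * sqrt(2.0 / n_per_arm)
    z_crit = z.inv_cdf(1.0 - alpha / 2.0)
    return z.cdf(effect / se - z_crit)


def scenario_power(n_per_arm, effect, sd, dropout, responder_fraction, sd_inflation):
    """Apply drift assumptions to the planned design, then recompute power."""
    return power_two_arm(
        n_per_arm=n_per_arm * (1.0 - dropout),   # fewer evaluable participants
        effect=effect * responder_fraction,      # average effect diluted by non-responders
        sd=sd * sd_inflation,                    # extra noise from site/measurement drift
    )


# Hypothetical scenario pack: each entry changes one or more planning assumptions.
scenarios = {
    "baseline":      dict(dropout=0.0, responder_fraction=1.0, sd_inflation=1.0),
    "dropout_20pct": dict(dropout=0.2, responder_fraction=1.0, sd_inflation=1.0),
    "site_drift":    dict(dropout=0.0, responder_fraction=1.0, sd_inflation=1.2),
    "dilution_70":   dict(dropout=0.0, responder_fraction=0.7, sd_inflation=1.0),
    "all_three":     dict(dropout=0.2, responder_fraction=0.7, sd_inflation=1.2),
}

results = {
    name: round(scenario_power(n_per_arm=100, effect=0.4, sd=1.0, **s), 2)
    for name, s in scenarios.items()
}
for name, pw in results.items():
    print(f"{name:>14}: power ~ {pw}")
```

In this toy setup the planned design sits just above 80% power, and each drift assumption on its own pulls it below that line. The point of the sketch is the shape of the exercise (a small, named set of what-ifs against one decision metric), not the specific numbers.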

Typical turnaround:

1–2 weeks

Best when you need:

A protocol that is defensible under drift and avoids mid-study redesign pressure.

+ Tier B

+ Reviewer-Ready Logic Pack (partner/regulatory-facing defensibility)

+ Mid-Study Amendment Decision (pre-plan)

+ Optional: Reusable model asset (documented I/O + scenario engine; code only if needed)

Best for:

High-exposure milestones (phase transitions, partner diligence, pivotal-like programs, or major capital at risk) where the real downside is not failure—it’s an ambiguous readout after major spend.

Outputs:

- Full Assumptions Map + Architecture + Scenario Pack (audit-grade)

- “What changes conviction” thresholds (what would actually flip the decision)

- Partner-ready logic appendix (traceable: claim ↔ endpoint ↔ timing ↔ decision rules)

- Optional reusable asset (documented I/O + scenario engine; code only if needed)
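One way to picture a "what changes conviction" threshold is as a tipping point: the value of a drift assumption at which a design stops meeting its decision criterion. The sketch below searches for the dropout rate at which approximate power falls below a target. It assumes a two-arm comparison of means, a normal approximation, and dropout as random loss of sample; the function names, the 80% target, and the inputs are hypothetical and only illustrate the idea.

```python
from math import sqrt
from statistics import NormalDist


def power_two_arm(n_per_arm, effect, sd, alpha=0.05):
    """Approximate power of a two-sided z-test on the difference in arm means."""
    z = NormalDist()
    se = sd * sqrt(2.0 / n_per_arm)
    return z.cdf(effect / se - z.inv_cdf(1.0 - alpha / 2.0))


def dropout_tipping_point(n_per_arm, effect, sd, target=0.80, tol=1e-4):
    """Largest dropout rate (by bisection) that still keeps power >= target."""
    if power_two_arm(n_per_arm, effect, sd) < target:
        return 0.0  # the design misses the target even with no dropout
    lo, hi = 0.0, 0.99
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if power_two_arm(n_per_arm * (1.0 - mid), effect, sd) >= target:
            lo = mid   # still acceptable: the tipping point is further out
        else:
            hi = mid   # power already lost: the tipping point is closer in
    return lo


tipping = dropout_tipping_point(n_per_arm=120, effect=0.4, sd=1.0)
print(f"Power drops below 80% once dropout exceeds ~{tipping:.0%}")
```

For these illustrative inputs the threshold lands at roughly 18% dropout. Stating a threshold in this form tells a reviewer which observed number would flip the decision, instead of leaving the judgment implicit.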

Includes:

Expanded scenario coverage + an audit trail suitable for internal governance and external scrutiny.

Typical turnaround:

Project-based

Best when you need:

A readout that stays interpretable under scrutiny and variance, because here the downside isn’t being wrong but becoming ambiguous.

Scope discipline: Stage-0 and audits test decision logic—not outcomes.

What decision are you facing right now?

Pick the gate you’re at today:

Modules (same library, packaged for biotech execution)

- Design • Review • Mid-Study • Post-Readout • Asset

Phase A — Design

Team question:

What breaks first once the protocol meets real sites and real behavior?

If you don’t do it:

you discover feasibility or endpoint fragility after spend starts.

You get:

assumptions register + first-break scenarios + minimum fix set.

Team question:

Are endpoints/biomarkers worth their cost and operational burden?

If you don’t do it:

expensive low-information panels add noise and missingness.

You get:

minimal must-measure set + timing windows + ROI notes.

Team question:

Will the regimen survive adherence drift and exposure variability?

If you don’t do it:

program “fails” because regimen fragility erased signal.

You get:

regimen scenario matrix + robust dose/interval options + defensible rationale.

Team question:

What transfers to the next context (phase, population, sites, real world)—and what breaks?

If you don’t do it:

you scale a result that collapses in the next setting.

You get:

transferability map + context-adjustment scenarios + scale-readiness notes.

Team question:

Will workflow and capacity constraints distort outcomes at scale?

If you don’t do it:

ops variance dominates measured effects.

You get:

bottleneck map + drop-off points + intervention what-ifs.

Not sure which block fits your situation?

Stage-0 helps you identify which decision risks actually matter before you invest further. You get a short written verdict — modelable as-is, modelable with changes, or not a fit right now — plus the lowest-effort next step.

Phase B — Review / Partner diligence

Team question:

Can we defend design logic under partner/regulatory scrutiny?

If you don’t do it:

rewrite cycles and delays hit your milestone timeline.

You get:

defensibility pack + assumptions log + claim↔endpoint alignment.

Phase C — Mid-Study

Team question:

What adjustment reduces risk without invalidating interpretability?

If you don’t do it:

amendment pressure leads to an uninterpretable readout.

You get:

constrained scenarios + low-risk options + decision rationale note.

Guardrail:

We don’t replace ethics or regulatory oversight. We provide scenario transparency only.

Phase D — Post-Readout

Team question:

Was the readout null because the biology failed, or because the design drifted?

If you don’t do it:

you kill a viable program or fund the wrong v2.

You get:

divergence map + next-step options + test plan.

Team question:

Would this hold if rerun, audited, or reanalyzed?

If you don’t do it:

confidence collapses under scrutiny.

You get:

robustness metrics + sensitivity notes + audit-ready summary.

Team question:

What unmodeled factor likely shaped the readout?

If you don’t do it:

the next study repeats the same blind spot at higher burn.

You get:

candidate confounders + testable hypotheses plan.

Phase E — Asset

Inspect our math

Public, versioned artifacts—reviewable by peers.

De-identified examples are available on Zenodo, with accompanying GitHub repos. Outputs are delivered in research-grade formats (LaTeX/PDF) suitable for citation and review, not as marketing decks.

Enter Stage-0 (Team Intake)

Stage-0 is the first gate in our process. We review protocol logic and assumptions only—no outcome optimization.

You’ll get a short written verdict in ~1 business day: modelable as-is, modelable with changes, or not a fit right now, plus the lowest-effort next step.

Form fields (minimal):

- Team / role (Clinical Dev, Translational, Biostats, PM, BD)

- Decision gate (protocol lock / N & budget / regimen / diligence / mid-study / readout / asset)

- Short protocol synopsis (non-sensitive)

No sensitive data required for Stage-0.